The EU AI Act entered into force on 1 August 2024, and its obligations are phasing in over the following years. If you are building or deploying AI products in the European market, compliance is no longer a side note. It is a product and growth requirement.

The hardest part for startups is not ambition. It is ambiguity. Teams lose momentum trying to decode legal text instead of shipping features. That is why we built the AI Act Risk Classification Tool inside ComplianceRadar.dev: to turn legal uncertainty into a practical, fast first answer.

The Core Problem: Annex III Is Hard to Interpret Quickly

The AI Act is commonly summarized as four risk tiers: Unacceptable (prohibited), High-Risk, Limited-Risk, and Minimal-Risk. For most SaaS and product teams, the real danger sits in Annex III, which lists the standalone High-Risk use cases.

If your AI touches hiring, credit scoring, access to essential services such as healthcare, critical infrastructure, education, or law enforcement scenarios, your obligations can increase sharply. A wrong assumption can trigger costly rework, delayed launches, or regulatory fines.

How the Classifier Works in the App

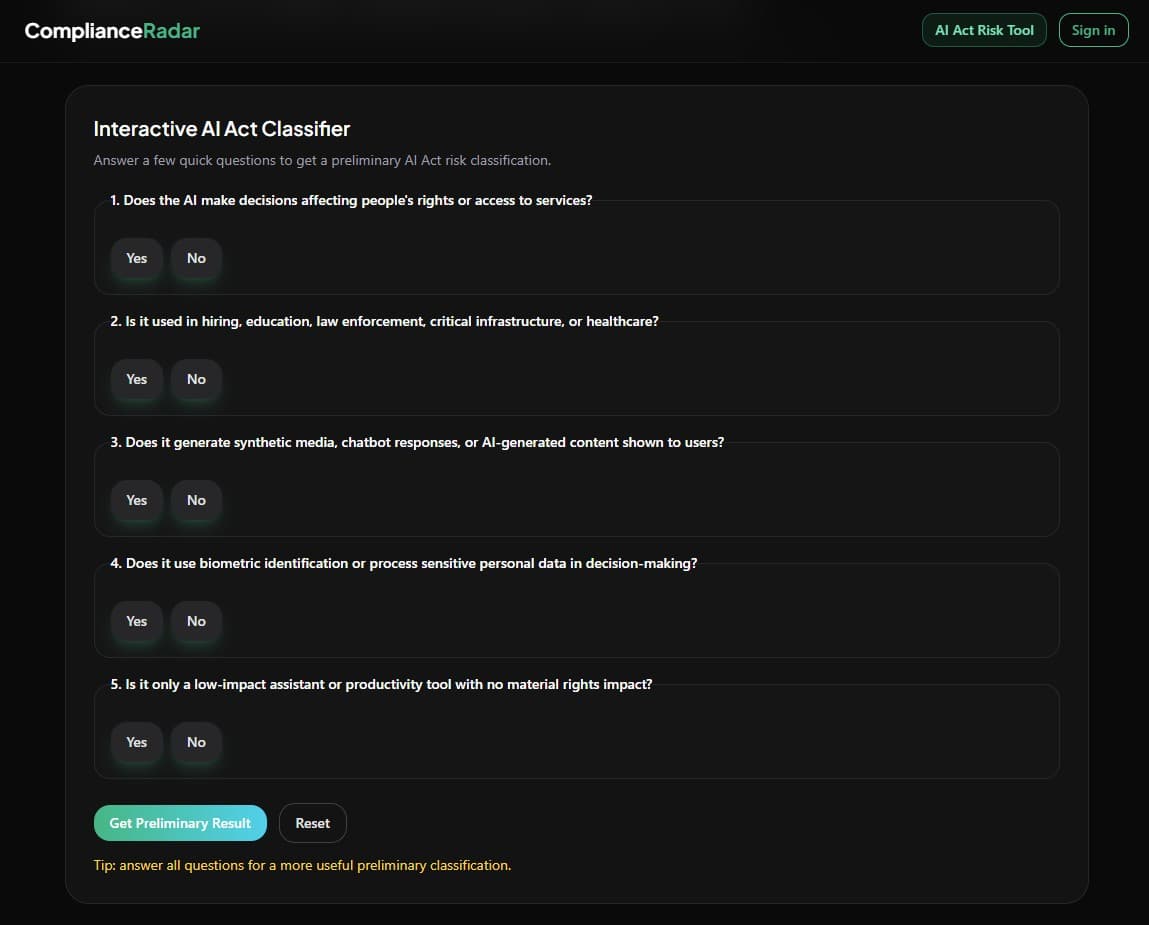

We translated the legal logic into a streamlined product flow. The widget asks clear Yes/No questions about decision impact, sector context, synthetic content, biometric processing, and rights impact. In under two minutes, you get a preliminary risk tier and suggested next steps.
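To make the flow concrete, here is a minimal sketch of how Yes/No answers could map to a preliminary tier. It is not the tool's actual implementation: the `Answers` fields, the `classify` function, and the rule ordering are all hypothetical, and the rules are a deliberate simplification of the Act.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high-risk"
    LIMITED = "limited-risk"
    MINIMAL = "minimal-risk"


@dataclass
class Answers:
    # Hypothetical Yes/No answers; names are illustrative, not the tool's schema.
    prohibited_practice: bool = False      # matches a banned practice
    annex_iii_sector: bool = False         # sector context (hiring, credit, ...)
    affects_decisions: bool = False        # decision impact on individuals
    rights_impact: bool = False            # impact on fundamental rights
    biometric_processing: bool = False     # biometric identification or categorization
    synthetic_content: bool = False        # generates text, image, audio, or video
    direct_user_interaction: bool = False  # e.g. a chatbot


def classify(a: Answers) -> RiskTier:
    """Return a preliminary tier, checking the strictest rules first."""
    if a.prohibited_practice:
        return RiskTier.PROHIBITED
    # Assumption: a listed sector only counts when the system also
    # influences decisions about people or affects their rights.
    if a.annex_iii_sector and (a.affects_decisions or a.rights_impact):
        return RiskTier.HIGH
    # Assumption: any biometric processing is flagged for stricter review.
    if a.biometric_processing:
        return RiskTier.HIGH
    if a.synthetic_content or a.direct_user_interaction:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


if __name__ == "__main__":
    hiring_screener = Answers(annex_iii_sector=True, affects_decisions=True)
    print(classify(hiring_screener).value)
```

Checking the strictest tier first means a system that triggers several answers always lands in the most demanding category, which is the safer default for a first-pass result.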

This is not a replacement for legal counsel. It is the fastest way for product, engineering, and founder teams to align early before spending weeks on assumptions.

What You Get from a Preliminary Classification

Minimal Risk

Most low-impact assistive systems remain in this category. You can keep shipping while maintaining core governance hygiene.

Limited Risk

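If users interact directly with an AI system such as a chatbot, or if the system generates synthetic content, transparency obligations apply. Your UX must clearly signal that users are interacting with AI and label generated content as such.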

High-Risk or Prohibited

If your use case maps to Annex III or to the prohibited practices in Article 5, the classifier flags it early so you can stop guessing and move into a stricter compliance track.

Compliance as a Product Advantage

In EU B2B sales, procurement and legal teams now ask risk-tier questions much earlier. Being able to explain your likely classification and next steps builds trust, shortens cycles, and helps protect runway.

Try the Tool in Your Workflow

Run a preliminary classification first, then move to a fuller scan for deeper compliance guidance.

Sources and References

We design content and tooling around primary legal sources and official implementation guidance so teams can map product decisions to verifiable references.

- Regulation (EU) 2024/1689 (EU AI Act) - EUR-Lex

- European Commission - Regulatory framework for AI

- ComplianceRadar.dev - AI Act Risk Classification Tool

- Related reading - EU AI Act explained for startups and developers

Disclaimer: This article and tool provide informational guidance for product teams and do not constitute formal legal advice.