If you are building an AI feature today—whether it's an OpenAI wrapper, an automated resume parser, or a customer service chatbot—and you have users in the European Union, the game has officially changed.

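The EU AI Act entered into force on 1 August 2024, and its obligations phase in over time: the bans on prohibited practices have applied since February 2025, most of the remaining rules (including the high-risk requirements and the transparency duties) apply from August 2026, and some high-risk systems embedded in regulated products have until August 2027.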

While enterprise companies are hiring armies of compliance officers and paying 300€/hour for legal consultants, indie hackers, startups, and agile product teams are largely left in the dark, wondering: "Does this law actually apply to my app?"

The short answer is: very likely yes, if your AI system or its output is used in the EU, wherever your company is based. And the fines for getting it wrong are terrifying.

Here is the no-nonsense, developer-friendly breakdown of what the EU AI Act is, how it affects your SaaS, and how you can avoid fines of up to 35 million EUR without hiring a law firm.

What is the EU AI Act?

Simply put, the EU AI Act is the world's first comprehensive legal framework for Artificial Intelligence. Think of it as the GDPR for AI, but instead of focusing just on personal data, it focuses on product safety and fundamental rights.

Unlike GDPR, which applies uniformly to almost all personal data processing, the AI Act takes a Risk-Based Approach. The rules you have to follow depend entirely on how dangerous the EU considers your specific AI use case.

The 4 Risk Categories (Where do you fit?)

The law divides all AI systems into four tiers. Figuring out which tier your app falls into is step one of compliance.

1. Unacceptable Risk (Banned)

These are AI systems that the EU considers a threat to human safety or rights.

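Examples: Social scoring systems (like in China), real-time remote biometric identification in publicly accessible spaces for law enforcement (outside a few narrow exceptions), or AI that uses manipulative or deceptive techniques to distort human behavior in ways that cause significant harm.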

Your Action: Don't build this. It's illegal in the EU.

2. High-Risk (Strictly Regulated)

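This is where many B2B startups accidentally find themselves. If your AI is used in one of the sensitive areas the Act lists in Annex III (such as employment, education, or access to essential services like credit), or it is a safety component of an already regulated product, you are likely high-risk.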

Examples: AI for CV screening/hiring (HR tech), credit scoring (Fintech), medical devices, or educational grading.

Your Action: You need robust Risk Management systems, human oversight features, continuous logging, and a massive amount of technical documentation (Annex IV).
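
To make "continuous logging" and "human oversight" a little more concrete, here is a minimal sketch of an append-only decision log for something like a CV screener. Every name in it (`log_event`, `needs_human_review`, the event kinds, and the fields) is a hypothetical illustration; the Act does not prescribe this format, and a real system would need durable storage and access controls.

```python
import json
from datetime import datetime, timezone


def log_event(log: list[str], kind: str, **fields) -> dict:
    """Append one event to the log as a JSON line and return it."""
    event = {"kind": kind, "at": datetime.now(timezone.utc).isoformat(), **fields}
    log.append(json.dumps(event, sort_keys=True))
    return event


def needs_human_review(log: list[str], subject_id: str) -> bool:
    """True if the model has decided on this subject but no human has reviewed it."""
    events = [json.loads(line) for line in log]
    decided = any(
        e["kind"] == "model_decision" and e["subject_id"] == subject_id for e in events
    )
    reviewed = any(
        e["kind"] == "human_review" and e["subject_id"] == subject_id for e in events
    )
    return decided and not reviewed


# Hypothetical usage: the model rejects a candidate, then a recruiter overrides it.
log: list[str] = []
log_event(log, "model_decision", subject_id="c1", model_version="v1",
          score=0.31, outcome="reject")
pending = needs_human_review(log, "c1")
log_event(log, "human_review", subject_id="c1", reviewer="alice", outcome="advance")
```

Recording the review as its own event, instead of editing the model's record, keeps the original automated decision visible for later audits.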

3. Limited Risk (Transparency Required)

If your AI interacts directly with humans or generates content, you are likely in this tier.

Examples: Customer support chatbots, deepfake generators, or AI-generated text/images.

Your Action: You must comply with Article 50 (Transparency), which was numbered Article 52 in earlier drafts of the law. You need to explicitly tell the user they are interacting with an AI or that the content is AI-generated. No pretending your bot is a real human named "Sarah."
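
As a rough illustration of the disclosure requirement in a chat backend, the sketch below wraps every model reply with an explicit AI flag and shows a notice on the first message of a session. `ChatSession`, `AI_DISCLOSURE`, and the payload keys are assumptions made for this example, not wording or field names taken from the regulation.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical wording; pick text that is clear to your own users.
AI_DISCLOSURE = "You are chatting with an AI assistant, not a human."


@dataclass
class ChatSession:
    disclosed: bool = False

    def reply(self, model_output: str) -> dict:
        """Wrap raw model output in a payload the UI can label as AI-generated."""
        notice: Optional[str] = None if self.disclosed else AI_DISCLOSURE
        self.disclosed = True
        return {"text": model_output, "ai_generated": True, "notice": notice}


session = ChatSession()
first = session.reply("Hi! How can I help?")
second = session.reply("Your order shipped yesterday.")
```

Here the first payload carries the notice and later ones only carry the `ai_generated` flag, so the frontend can show a banner once and keep a small label on each message.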

4. Minimal Risk (No Mandatory Obligations)

The vast majority of basic AI tools fall here.

Examples: AI used for basic inventory management, spam filters, or AI in video games.

Your Action: You are generally free to operate, though the EU encourages voluntary codes of conduct.
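
The four tiers above can be sketched as a small decision function that checks the strictest tier first. This is a deliberately simplified illustration built from the examples in this article: the `UseCase` flags are hypothetical self-assessment questions, not the legal test, and a real classification depends on the Act's annexes and exemptions.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


@dataclass(frozen=True)
class UseCase:
    # Hypothetical flags mirroring the examples listed for each tier.
    social_scoring: bool = False
    realtime_public_biometric_id: bool = False
    harmful_manipulation: bool = False
    cv_screening: bool = False
    credit_scoring: bool = False
    medical_device: bool = False
    educational_grading: bool = False
    talks_to_humans: bool = False
    generates_content: bool = False


def classify(uc: UseCase) -> RiskTier:
    """Return the strictest tier whose example flags match the use case."""
    if uc.social_scoring or uc.realtime_public_biometric_id or uc.harmful_manipulation:
        return RiskTier.UNACCEPTABLE
    if uc.cv_screening or uc.credit_scoring or uc.medical_device or uc.educational_grading:
        return RiskTier.HIGH
    if uc.talks_to_humans or uc.generates_content:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


support_bot = classify(UseCase(talks_to_humans=True))
resume_parser = classify(UseCase(cv_screening=True, talks_to_humans=True))
spam_filter = classify(UseCase())
```

Note that the tiers are not strictly exclusive in practice: a high-risk system that also talks to users still has to meet the transparency duties, so treat the returned tier as the strictest set of obligations, not the only one.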

The Problem: The "Consultant Trap"

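The fines for non-compliance are devastating: Up to 35 million EUR or 7% of global annual turnover, whichever is higher, for building a banned system. Most other violations, including breaches of the high-risk and transparency rules, are capped at 15 million EUR or 3%. For SMEs and startups, the lower of the two amounts applies, which is still more than enough to sink a small company.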
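
The "whichever is higher" rule is easy to misread, so here is the arithmetic as a short sketch. The function name and parameters are made up for illustration; the `sme` flag reflects the Act's provision that small companies and startups face the lower of the two amounts, and the result is a ceiling, not a prediction of what a regulator would actually impose.

```python
def max_fine_eur(
    annual_turnover_eur: int,
    fixed_cap_eur: int = 35_000_000,
    turnover_pct: int = 7,
    sme: bool = False,
) -> int:
    """Upper bound of a fine: fixed cap vs. percentage of worldwide annual turnover."""
    pct_amount = annual_turnover_eur * turnover_pct // 100
    if sme:
        # SMEs and startups: the lower of the two amounts applies.
        return min(fixed_cap_eur, pct_amount)
    # Everyone else: the higher of the two amounts applies.
    return max(fixed_cap_eur, pct_amount)


print(max_fine_eur(1_000_000_000))        # 70000000 (7% exceeds the fixed cap)
print(max_fine_eur(2_000_000))            # 35000000 (the fixed cap is higher)
print(max_fine_eur(2_000_000, sme=True))  # 140000 (lower amount for an SME)
```

For a bootstrapped product with 2 million EUR in turnover, the SME rule is the difference between a 35 million EUR ceiling and a 140,000 EUR one.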

Because the stakes are so high, a massive industry of "AI Compliance Consultants" has popped up overnight. They will gladly charge your startup thousands of dollars to read the 150-page legal PDF and tell you what risk tier you probably belong to.

For a solo developer or a bootstrapped startup, paying $5,000 for a compliance audit before you even have Product-Market Fit is out of the question.

The Solution: Automated Compliance Scanning

This exact frustration is why we built ComplianceRadar.dev.

We spent weeks tearing apart the dense legal jargon of the EU AI Act and translating it into an executable decision tree. Instead of paying a lawyer, you can now get your compliance status instantly.

Here is how ComplianceRadar.dev helps you stay legal:

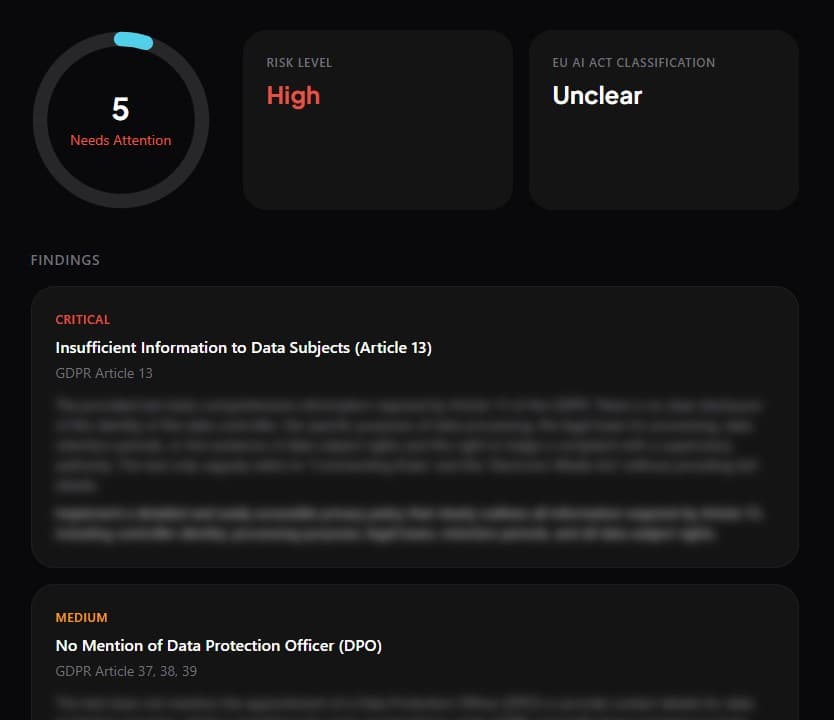

- Instant Risk Classification: Paste your website URL or describe your AI feature. In 15 seconds, our engine analyzes your public-facing use case and categorizes you into one of the four risk tiers.

- Actionable Developer Checklists: Instead of giving you 50 pages of legal theory, we give you a precise, technical to-do list. (e.g., "Add a disclaimer label to your chat UI to satisfy Article 50").

- Zero Code Access Required: We don't need your API keys, and we don't look at your backend code. We assess the public footprint of your product, just like an auditor would.

Don't Wait for August 2026

If you are selling SaaS to European customers, enterprise clients will soon start sending you AI compliance questionnaires. If you can't confidently state your risk tier and show your documentation, you will lose the deal.

Stop guessing. Find out exactly where your product stands today.

Run your preliminary risk check

Know your tier first, then prioritize the right compliance controls and documentation.

Sources and references

- Regulation (EU) 2024/1689 (EU AI Act) - Official Journal (EUR-Lex)

- European Commission: EU AI Act policy page

- Regulation (EU) 2016/679 (GDPR) - Official Journal (EUR-Lex)

This article provides informational guidance and does not constitute legal advice.