In B2B SaaS, trust is your most valuable currency.

With the arrival of the EU AI Act, that trust is no longer built only through a Privacy Policy or Terms of Service.

It now depends on something new: how transparent you are about your AI system.

The Problem: AI Is Now a Compliance Surface

Most founders focus on product features, performance, and scalability.

But they overlook a critical layer: AI transparency.

If your product uses AI and serves EU users, your system likely falls within the scope of the EU AI Act.

That means your users, and especially enterprise clients, will ask: What model are you using? What happens to our data? Can we trust your outputs?

If you do not answer these questions clearly, you introduce friction in every sales conversation.

Why We Built an AI Transparency Page

At ComplianceRadar.dev, our mission is to help companies identify regulatory risks.

But before launching, we asked ourselves a simple question: Are we transparent about our own system?

The answer was not good enough.

So we paused, documented everything properly, and published a dedicated AI Transparency Page.

The Misconception

Many founders assume that transparency means:

- open-sourcing your code

- exposing system prompts

- revealing proprietary logic

It does not.

You can be transparent without exposing your intellectual property.

How We Structured Our AI Transparency

Here is the exact structure we implemented.

1. Data Privacy First (Zero Data Retention)

We use modern AI models (e.g. Gemini 2.5 Flash), but only through secure enterprise APIs.

This allows us to guarantee:

- user inputs are processed ephemerally

- no customer data is used to train models

- scanned content remains private

This is not just compliance; it is a major trust signal.
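
As a rough illustration of what ephemeral processing can look like in application code, the sketch below keeps submitted content in memory for a single call and produces only non-content metadata for storage. It assumes a hypothetical `analyze` callable standing in for the model call; the function and field names are illustrative and do not describe ComplianceRadar.dev's actual implementation. Retention on the provider side still depends on the API terms you have in place.

```python
import hashlib
import time
from typing import Callable


def scan_ephemerally(content: str, analyze: Callable[[str], dict]) -> tuple[dict, dict]:
    """Run one scan without persisting the submitted content.

    `analyze` is a hypothetical stand-in for the model call.
    Returns (result, audit_record); only the audit record is meant to be stored.
    """
    started = time.monotonic()
    result = analyze(content)  # content is held in memory only for this call

    # The audit record holds metadata only: a one-way digest, a size, a duration.
    audit_record = {
        "content_sha256": hashlib.sha256(content.encode("utf-8")).hexdigest(),
        "content_chars": len(content),
        "duration_s": round(time.monotonic() - started, 3),
    }
    return result, audit_record


if __name__ == "__main__":
    # Fake analyzer used purely for demonstration.
    result, audit = scan_ephemerally("<html>example page</html>", lambda text: {"score": 80})
    print(result, sorted(audit))
```

The design choice here is that anything written to logs or a database is derived from the content but cannot be used to reconstruct it.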

2. Strict Prompt Governance

Our AI does not guess.

We engineered strict constraints to ensure:

- structured outputs (`application/json`)

- consistent scoring logic

- minimal hallucination risk

Instead of acting as a creative chatbot, the system behaves more like a deterministic analysis engine.
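
One way to enforce constraints like these is to validate every model response before it reaches a user. The sketch below parses a JSON response and rejects anything that does not match an expected shape. The field names (`score`, `findings`) and the 0 to 100 range are assumptions made for illustration, not our actual schema.

```python
import json


class InvalidModelOutput(ValueError):
    """Raised when a model response violates the expected structure."""


def parse_scan_output(raw: str) -> dict:
    """Parse and validate a JSON response with hypothetical fields."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise InvalidModelOutput(f"not valid JSON: {exc}") from exc

    if not isinstance(data, dict):
        raise InvalidModelOutput("top-level value must be an object")

    score = data.get("score")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(score, bool) or not isinstance(score, int) or not 0 <= score <= 100:
        raise InvalidModelOutput("'score' must be an integer from 0 to 100")

    findings = data.get("findings")
    if not isinstance(findings, list) or not all(isinstance(f, str) for f in findings):
        raise InvalidModelOutput("'findings' must be a list of strings")

    # Return only the validated fields so unexpected keys are dropped.
    return {"score": score, "findings": findings}
```

Validation like this does not make the model itself deterministic. It only ensures that malformed or out-of-range output is rejected before it can appear in a report.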

3. Clear System Limitations

AI is powerful, but not perfect.

We explicitly define that ComplianceRadar.dev is an assistive co-pilot, not a replacement for legal professionals.

This is critical for managing expectations, reducing legal risk, and building honest trust.
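
A limitation statement is easier to keep honest when it travels with every output rather than living only on a policy page. The sketch below shows one possible approach: a hypothetical `ScanReport` type whose notice cannot be overridden by callers. The class name and the notice wording are assumptions for illustration.

```python
from dataclasses import dataclass, field

LIMITATIONS_NOTICE = (
    "This report is generated with AI assistance and may contain errors. "
    "It does not replace review by a qualified legal professional."
)


@dataclass
class ScanReport:
    """Hypothetical report type that always carries its limitations notice."""

    findings: list[str]
    # init=False keeps the notice out of the constructor, so callers cannot omit or replace it.
    notice: str = field(default=LIMITATIONS_NOTICE, init=False)

    def render(self) -> str:
        """Return the findings as bullet lines followed by the notice."""
        lines = [f"- {finding}" for finding in self.findings]
        return "\n".join(lines + ["", self.notice])


if __name__ == "__main__":
    print(ScanReport(findings=["Missing cookie consent banner"]).render())
```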

Why This Matters (Especially for B2B)

An AI Transparency Page is not just a compliance exercise. It is a sales asset.

It tells your clients:

- you understand regulation

- you take data seriously

- your system is engineered responsibly

In many cases, this becomes the difference between "Interesting tool" and "We can actually use this in production".

The Takeaway

If you are building an AI-powered product for the European market, transparency is no longer optional.

You do not need to expose your secrets. But you do need to explain what your system does, how it behaves, and how you protect user data.

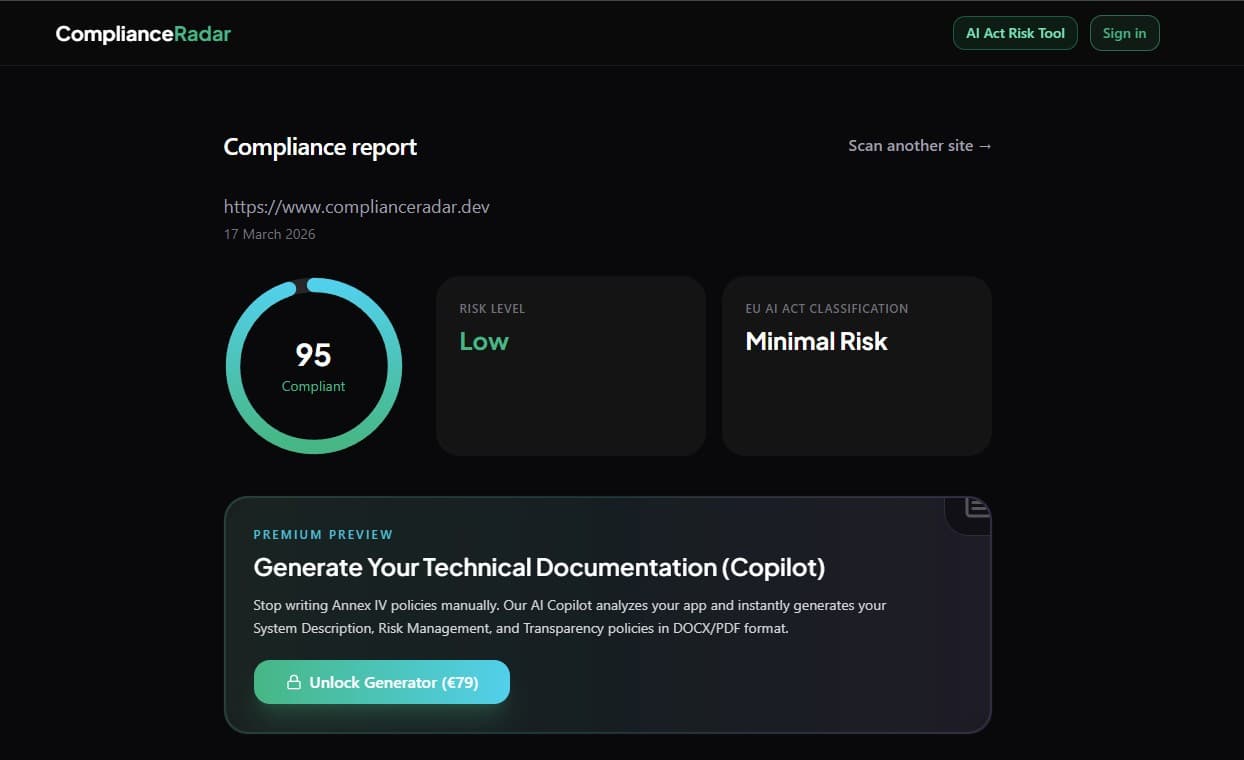

Try it yourself

Curious how your website performs under GDPR, ePrivacy, and the EU AI Act? Run a free scan with ComplianceRadar.dev.

Final Thought

We believe compliance should be visible. That is why we do not just scan other systems. We document our own.

Sources and further reading

- Official text of the EU AI Act (EUR-Lex)

- General Data Protection Regulation (GDPR) Official Text

- ComplianceRadar.dev: EU AI Act Risk Categories Explained

This article is informational and does not constitute legal advice.